Airflow pipelines retrieve centrally managed connection information by specifying the relevant conn_id.
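As a rough illustration of looking up connection details by conn_id, here is a minimal pure-Python sketch. It does not use Airflow itself: the `CONNECTION_URIS` dictionary is a hypothetical stand-in for the central store, and the `warehouse_db` entry, the `ConnectionInfo` class and the `get_connection` helper are made-up names for this example only.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.parse import unquote, urlsplit


@dataclass(frozen=True)
class ConnectionInfo:
    """Fields attached to one conn_id (illustrative, not Airflow's own class)."""
    conn_id: str
    conn_type: str
    host: str
    login: str
    password: str
    port: Optional[int]
    schema: str


# Hypothetical in-memory stand-in for the centrally managed store.
CONNECTION_URIS = {
    "warehouse_db": "postgres://etl_user:s3cr%40t@db.example.internal:5432/analytics",
}


def get_connection(conn_id: str) -> ConnectionInfo:
    """Resolve a conn_id into its host / login / password / schema details."""
    try:
        uri = CONNECTION_URIS[conn_id]
    except KeyError:
        raise LookupError(f"conn_id {conn_id!r} is not defined") from None
    parts = urlsplit(uri)
    return ConnectionInfo(
        conn_id=conn_id,
        conn_type=parts.scheme,
        host=parts.hostname or "",
        login=unquote(parts.username or ""),        # undo percent-encoding
        password=unquote(parts.password or ""),
        port=parts.port,
        schema=parts.path.lstrip("/"),
    )


conn = get_connection("warehouse_db")
print(conn.host, conn.port, conn.schema)
```

The point of the design is that pipeline code only ever names the conn_id; credentials stay in one place and can be rotated without touching the pipelines.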

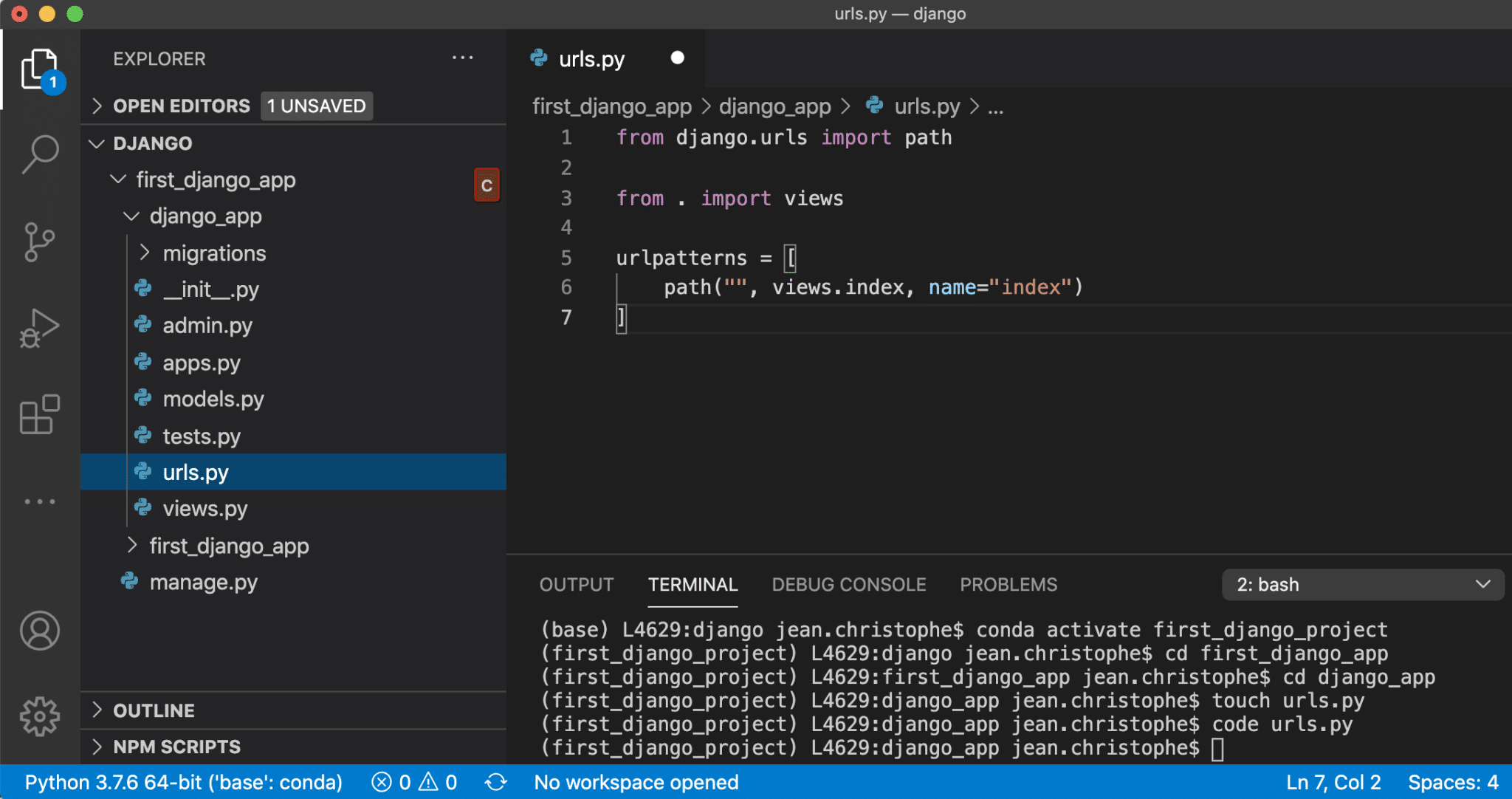

The information needed to connect to external systems is stored in the Airflow metastore database. A conn_id is defined there, with hostname / login / password / schema information attached to it. Hooks are interfaces to external platforms and databases like Hive, S3, MySQL, Postgres, HDFS, and Pig. Hooks implement a common interface when possible, and act as a building block for operators.

Airflow pools can be used to limit the execution parallelism on arbitrary sets of tasks. Jinja is a modern and designer-friendly templating language for Python, modelled after Django’s templates. The execution_date is the logical date and time for a DAG Run and its Task Instances.

How to work with Django models inside Airflow tasks: according to the official Airflow documentation, Airflow provides hooks for interaction with databases (like MySqlHook / PostgresHook / etc.) that can later be used in operators for raw query execution.

Practitioners now often just start with a dbt project. Airflow, especially with a Kubernetes deployment, feels unnecessarily complex for a lot of data teams. Each functional sub-DAG of a typical Airflow DAG is now a specialized product: EL, T, reverse-ETL, data apps, metrics layer.

I am using a Sensor to monitor my Airbyte sync, since a sensor that releases its slot between checks (for example in reschedule mode) does not occupy an Airflow worker slot while it waits, which helps reduce Airflow load.

A good default is at least 480,000 iterations, which is what Django … The iteration count used should be adjusted to be as high as your server can tolerate.

I can also confirm, just by cd-ing into the dir, that the directory structure is as posted in the question. I can also confirm that airflow is the username and airflow/airflow is the install dir, so at least that part is not the issue.
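The pool idea mentioned above, capping how many tasks from some group run at the same time, can be pictured with a small standard-library sketch. This is not how Airflow implements pools; `SlotPool` and `run_all` are hypothetical names, and threads stand in for tasks.

```python
import threading
import time


class SlotPool:
    """Hypothetical pool with a fixed number of slots (threads stand in for tasks)."""

    def __init__(self, slots: int) -> None:
        self._semaphore = threading.BoundedSemaphore(slots)
        self._lock = threading.Lock()
        self.running = 0   # tasks currently holding a slot
        self.peak = 0      # highest concurrency observed so far

    def run(self, task, *args):
        # Block until a slot is free, then run the task while holding it.
        with self._semaphore:
            with self._lock:
                self.running += 1
                self.peak = max(self.peak, self.running)
            try:
                return task(*args)
            finally:
                with self._lock:
                    self.running -= 1


def run_all(pool: SlotPool, tasks) -> None:
    """Start every task at once; the pool decides how many actually overlap."""
    threads = [threading.Thread(target=pool.run, args=(t,)) for t in tasks]
    for th in threads:
        th.start()
    for th in threads:
        th.join()


pool = SlotPool(slots=2)
run_all(pool, [lambda: time.sleep(0.01) for _ in range(6)])
print("peak concurrency:", pool.peak)  # never more than the 2 configured slots
```

Six tasks are submitted together, but at most two hold a slot at any moment; the rest wait their turn, which is the behaviour a pool is meant to give to an arbitrary set of tasks.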
Each task is an implementation of an Operator. An operator describes a single task in a workflow. A sensor is an operator that waits (polls) for a certain time, file, database row, S3 key, etc. A task instance is an instance of a task that has been assigned to a DAG and has a state associated with a specific DAG run (i.e. for a specific execution_date).
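The wait-and-poll behaviour described above can be sketched in plain Python. This is only an illustration of the polling loop, not Airflow's sensor class: `poke_until` and its parameters are hypothetical names, and unlike a sensor that frees its slot between checks, this loop simply sleeps in-process.

```python
import time


def poke_until(condition, poke_interval=1.0, timeout=60.0,
               sleep=time.sleep, clock=time.monotonic):
    """Call `condition` repeatedly until it returns True or `timeout` elapses.

    `sleep` and `clock` are injectable so the loop can be exercised
    without real waiting.
    """
    deadline = clock() + timeout
    while True:
        if condition():
            return True
        if clock() >= deadline:
            raise TimeoutError(f"condition not met within {timeout} seconds")
        sleep(poke_interval)


# Usage: a made-up condition that becomes true on the third check.
checks = {"count": 0}


def file_has_arrived():
    checks["count"] += 1
    return checks["count"] >= 3


poke_until(file_has_arrived, poke_interval=0.01, timeout=5.0)
print("checks needed:", checks["count"])  # 3
```

The condition is checked before the first sleep, so something that is already satisfied returns immediately; the timeout keeps a sensor from waiting forever on a file or row that never appears.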

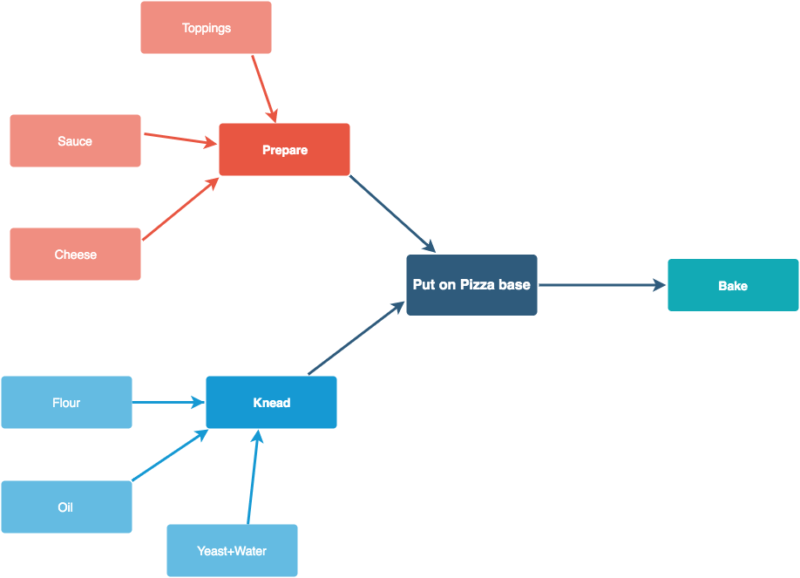

A Task defines a unit of work within a DAG; it is represented as a node in the DAG graph, and it is written in Python. A DAG run is an instance of a DAG, containing task instances that run for a specific execution_date; it is created by the Airflow scheduler or an external trigger. Airflow looks in your DAGS_FOLDER for modules that contain DAG objects in their global namespace and adds the objects it finds to the DagBag. The DAGS_FOLDER is the folder where Airflow pipelines live. A DAG is a collection of tasks, their dependencies and settings. XCom is a feature for cross-communication between tasks.

Apache Airflow + Django: it’s complicated. Airflow is designed to be a framework by itself, since it has different strategies for the generation of DAGs and a web interface that works on Flask.
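To make the DAG vocabulary above concrete (tasks as nodes, dependencies as edges, values passed between tasks), here is a small standard-library sketch. It assumes Python 3.9+ for `graphlib` and does not use Airflow; `run_dag`, the task names and the shared `xcom` dictionary are illustrative stand-ins only.

```python
from graphlib import TopologicalSorter


def run_dag(tasks, upstream):
    """Run callables in dependency order.

    tasks:    maps task_id -> callable taking the shared `xcom` dict
    upstream: maps task_id -> set of task_ids that must finish first
    Returns the execution order and every task's return value.
    """
    graph = {task_id: upstream.get(task_id, set()) for task_id in tasks}
    xcom = {}    # stand-in for cross-task communication
    order = []
    # static_order() yields each task only after all of its upstream tasks;
    # it raises graphlib.CycleError if the graph is not acyclic.
    for task_id in TopologicalSorter(graph).static_order():
        xcom[task_id] = tasks[task_id](xcom)
        order.append(task_id)
    return order, xcom


tasks = {
    "extract": lambda xcom: [1, 2, 3],
    "transform": lambda xcom: [x * 2 for x in xcom["extract"]],
    "load": lambda xcom: sum(xcom["transform"]),
}
upstream = {"transform": {"extract"}, "load": {"transform"}}

order, results = run_dag(tasks, upstream)
print(order)            # ['extract', 'transform', 'load']
print(results["load"])  # 12
```

One call to `run_dag` plays the role of a single DAG run: every task executes once, in an order that respects the declared dependencies, and downstream tasks read what upstream tasks returned.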
